Machine learning and neural networks have catapulted onto the pages of your favorite tech journals, and so has uncertainty about how to use them efficiently in the embedded world. Many potential users believe they must know a lot about data analytics, machine learning, and neural networks to use them effectively. But that isn’t necessarily the case, just as you don’t have to be an expert in digital signal processing (DSP) to implement an FIR filter or a DFT.

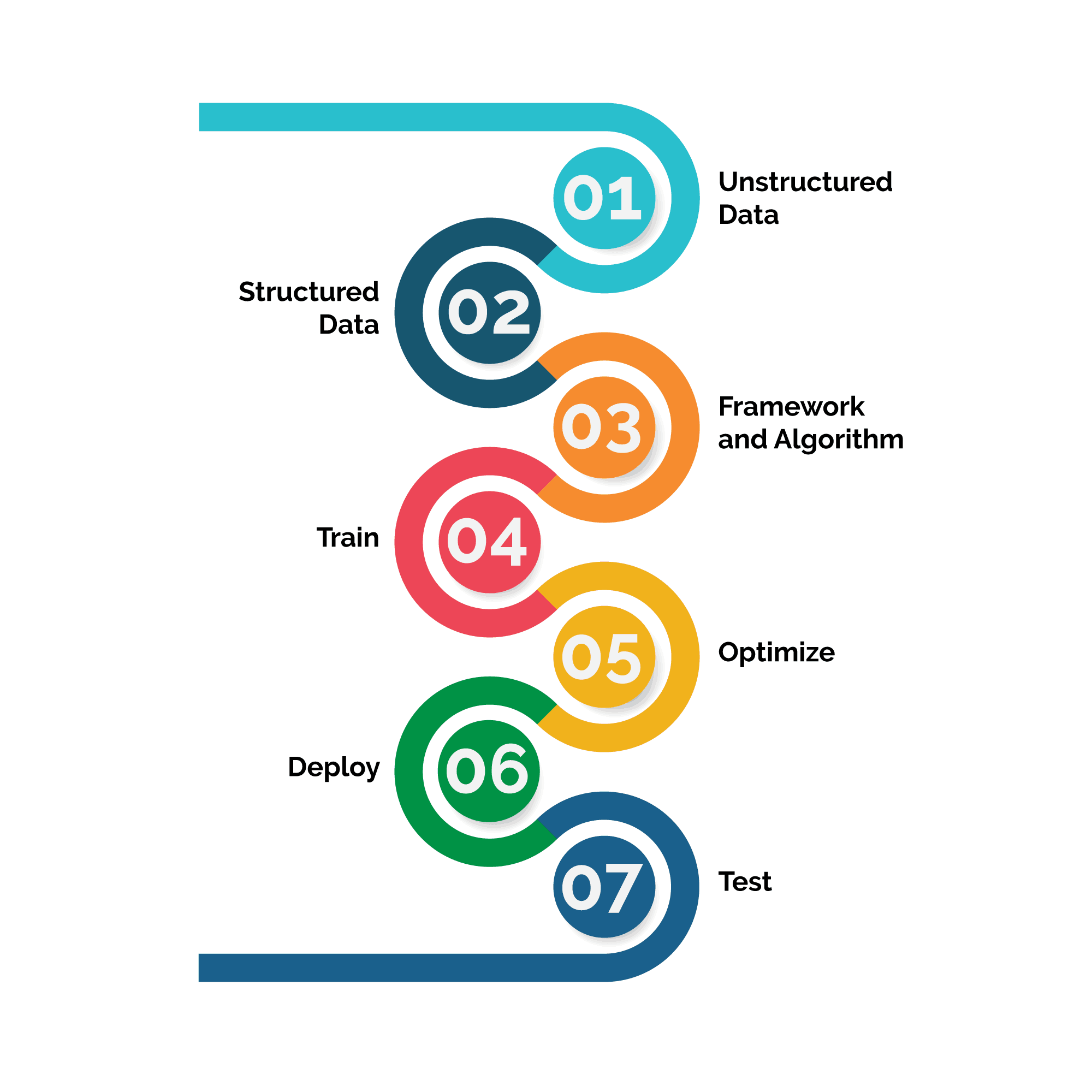

Figure 1 illustrates a typical process for structuring, training, and deploying a neural network on an embedded platform. The process begins with identifying an unstructured dataset and ends with testing the deployed neural network.

While it’s possible to start at any point in the process, many teams are at the end of the training phase and ready to deploy their model to a platform. For example, many teams have already identified and structured their datasets and trained on them using an existing neural network model (e.g., SSD, DenseBox, ResNet, SegNet, FPN, YOLO, or GoogLeNet). This is especially true for teams developing solutions that apply classification, detection, and/or segmentation to video for advanced driver-assistance systems (ADAS).

Being at the end of the training phase means your model achieves acceptable inference accuracy and you have one or more manifest files that describe it: the number of nodes and layers, the types of activation functions, the weights, and how everything ties together. At this point, the main question many face is: How do I deploy my trained neural network model onto an embedded platform?
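As a rough illustration of what such a manifest captures, the sketch below expresses a tiny model made only of fully connected layers as C data (layer sizes, activation type, weights, and biases), plus a loop that executes the layers in order. Everything here is hypothetical: the struct layout, the names `dense_layer_t` and `model_predict`, and the weights are made up for illustration, and real frameworks export models in their own formats.

```c
#include <assert.h>
#include <stddef.h>

/* Activation types a (hypothetical) model description might name. */
typedef enum { ACT_NONE, ACT_RELU } activation_t;

/* One fully connected layer: out = act(W * in + b).
   Weights are row-major: one row of in_dim weights per output node. */
typedef struct {
    size_t in_dim;
    size_t out_dim;
    const float *weights; /* out_dim * in_dim values */
    const float *bias;    /* out_dim values */
    activation_t act;
} dense_layer_t;

static float apply_activation(activation_t act, float x) {
    return (act == ACT_RELU && x < 0.0f) ? 0.0f : x;
}

static void dense_forward(const dense_layer_t *layer, const float *in, float *out) {
    for (size_t o = 0; o < layer->out_dim; ++o) {
        float acc = layer->bias[o];
        for (size_t i = 0; i < layer->in_dim; ++i) {
            acc += layer->weights[o * layer->in_dim + i] * in[i];
        }
        out[o] = apply_activation(layer->act, acc);
    }
}

/* Toy 2 -> 2 -> 1 network; the weights are made up for illustration. */
static const float w1[] = {1.0f, -1.0f, 0.5f, 0.5f};
static const float b1[] = {0.0f, 0.0f};
static const float w2[] = {1.0f, 2.0f};
static const float b2[] = {0.25f};

static const dense_layer_t model[] = {
    {2, 2, w1, b1, ACT_RELU},
    {2, 1, w2, b2, ACT_NONE},
};

#define MAX_WIDTH 8 /* widest layer this toy runner supports */

/* Run every layer in order, ping-ponging between two scratch buffers. */
float model_predict(const float *input) {
    float buf_a[MAX_WIDTH], buf_b[MAX_WIDTH];
    const float *in = input;
    float *out = buf_a;
    size_t n_layers = sizeof model / sizeof model[0];
    for (size_t k = 0; k < n_layers; ++k) {
        assert(model[k].out_dim <= MAX_WIDTH);
        if (k > 0) {
            assert(model[k].in_dim == model[k - 1].out_dim); /* layers must chain */
        }
        dense_forward(&model[k], in, out);
        in = out;
        out = (out == buf_a) ? buf_b : buf_a;
    }
    return in[0]; /* single-output model */
}
```

With the made-up weights above, `model_predict` returns 6.25 for the input {3.0, 1.0}. Declaring the model as `const` arrays typically lets an embedded toolchain keep the weights in flash rather than RAM.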

You may feel stuck and overwhelmed by the transition out of the training phase (the red bubble in Figure 1) to an embedded platform. Fortunately, there are many solutions.

The three tools described below can help you move out of the training phase and toward deployment. Moreover, each of these tools is free, open source, or both! DornerWorks’ engineers have knowledge of, experience with, or special training on these tools; however, they are not the only choices available. For more information about these tools and how DornerWorks can help, contact us.

In January 2018, ARM presented CMSIS-NN, a collection of efficient kernels developed to maximize performance and minimize the memory footprint of neural network applications on ARM Cortex-M processors. Once a trained model has been created, it must be optimized (using scripts provided by ARM) for compatibility with the CMSIS-NN library, which means converting it to the 8-bit and 16-bit fixed-point formats the kernels operate on. Once optimized, the neural network functions in the model are transformed into C-callable functions that can be added to application code.

An example can be seen in the video below.
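To give a feel for what those C-callable functions compute, here is a plain-C reference sketch of a fully connected layer in 8-bit (q7) fixed-point arithmetic, loosely modeled on the conventions of CMSIS-NN's q7 kernels: 8-bit inputs and weights, a 32-bit accumulator, a bias shifted left by `bias_shift` to line up with the products, and a result that is rounded, shifted right by `out_shift`, and saturated back to 8 bits. It is not the CMSIS-NN source and skips the library's SIMD optimizations; `fc_q7_ref` and `relu_q7_ref` are hypothetical names, and in a real deployment the shift values come from the quantization step.

```c
#include <assert.h>
#include <stdint.h>

typedef int8_t q7_t; /* 8-bit fixed-point value, as in CMSIS-NN */

/* Clamp a 32-bit value into the signed 8-bit range. */
static q7_t saturate_q7(int32_t x) {
    if (x > INT8_MAX) return INT8_MAX;
    if (x < INT8_MIN) return INT8_MIN;
    return (q7_t)x;
}

/* Hypothetical reference fully connected layer in q7 arithmetic.
   in:         dim_vec inputs
   weights:    num_rows x dim_vec values, row-major
   bias:       num_rows values, scaled by 2^bias_shift to match the
               fixed-point position of the input * weight products
   out_shift:  right shift that rescales the 32-bit accumulator to q7 */
void fc_q7_ref(const q7_t *in, const q7_t *weights, uint16_t dim_vec,
               uint16_t num_rows, uint16_t bias_shift, uint16_t out_shift,
               const q7_t *bias, q7_t *out) {
    assert(out_shift >= 1); /* the rounding term below needs out_shift >= 1 */
    for (uint16_t r = 0; r < num_rows; ++r) {
        /* Bias aligned to the product scale, plus half an output LSB
           so the final shift rounds to nearest. Multiplying avoids
           left-shifting a negative value. */
        int32_t acc = (int32_t)bias[r] * ((int32_t)1 << bias_shift)
                      + ((int32_t)1 << (out_shift - 1));
        for (uint16_t c = 0; c < dim_vec; ++c) {
            acc += (int32_t)in[c] * (int32_t)weights[r * dim_vec + c];
        }
        /* Assumes arithmetic right shift for negative values, as
           mainstream compilers (and CMSIS-NN itself) rely on. */
        out[r] = saturate_q7(acc >> out_shift);
    }
}

/* In-place ReLU on q7 data. */
void relu_q7_ref(q7_t *data, uint16_t size) {
    for (uint16_t i = 0; i < size; ++i) {
        if (data[i] < 0) data[i] = 0;
    }
}
```

For example, with 6 fractional bits (so 64 represents 1.0), inputs {64, 32}, a weight row {32, 32}, and a zero bias, calling `fc_q7_ref` with `bias_shift = 6` and `out_shift = 6` produces 48, which represents 0.75 (1.0 × 0.5 + 0.5 × 0.5).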

The NXP® eIQ™ machine learning software development environment enables the use of ML algorithms on NXP MCUs, i.MX RT crossover processors, and i.MX family SoCs. The eIQ development environment supports CMSIS-NN as well as the ARM NN SDK, TensorFlow Lite, and OpenCV. So if your neural network was developed with one of these inference engines, the NXP eIQ solution might be a good fit for you.

DNNDK (Deep Neural Network Development Kit) is used exclusively for porting neural networks to Xilinx devices. Its target, the DPU (Deep Learning Processor Unit), is a hardware engine implemented in Xilinx FPGA fabric that scales to fit various Xilinx® Zynq®-7000 and Zynq UltraScale+™ MPSoC devices, from edge to cloud, to meet the requirements of many diverse applications. DNNDK accepts models from Caffe and/or TensorFlow and maps them into DPU instructions. The DPU is supported by a runtime engine that includes a Linux driver, a loader, a tracer/profiler, and APIs that facilitate efficient deployment and testing of neural networks.

In summary, forging a path toward deploying neural networks on embedded platforms may seem daunting, but tools such as ARM CMSIS-NN, NXP eIQ, and Xilinx DNNDK make the effort manageable. To show how easy it is to get something up and running, DornerWorks used the Xilinx DNNDK and DPU with the Avnet Ultra96 development board for three use cases: pose detection, face detection, and vehicle detection, as shown in the gifs below.

DNNDK-DPU solutions from Xilinx and the Avnet Ultra96 development board enable pose detection, face detection, and vehicle detection.

Not only can DornerWorks help your team port trained models to an embedded system, we can also help with data analysis, data structuring, model selection, and model training. Python libraries such as scikit-learn and the pandas data analysis library make it easier to analyze and structure data for training neural networks in Caffe and/or TensorFlow. Stay tuned for additional blog posts on machine learning.