In the world of FPGAs, design and verification go hand in hand. Creating a testbench for a particularly complex module that needs to support multiple functions can seem quite daunting. Trying to tackle all of these functions at once in a single test quickly gets difficult and confusing, and what’s worse is that a test like this can’t be used until the entire design has been completed.

What if we find a bug after the design is finished that requires significant backtracking?

What if the full test takes a long time to run when, really, we just want to check a particular part of the test that is related to a small design change?

This can cost a lot of time and makes progress tracking difficult. A better solution is to test modularly. By breaking up our test into pieces that verify the design function by function, the task becomes much more approachable. Progress can be reported more frequently and more accurately, and problems can be detected early in the design process instead of waiting until it’s finished.

This dovetails nicely with an Agile framework or Test-Driven Development methodology, and it is where VUnit truly shines.

VUnit is a Python-based testbench framework that allows for easier organization, control, and execution of specific testcases for a design. This lets a designer tailor separate testcases to target specific functions of their modules and thus verify as they design. VUnit introduces many features that make modular testing a much easier task when compared to standard testbenches.

See the VUnit “Getting Started” guide for an introduction to how a VUnit testbench is structured: https://vunit.github.io/user_guide.html#introduction.

To demonstrate this concept, we’ll use a half-adder module. This half-adder will have the following ports:

    library ieee;
    use ieee.std_logic_1164.all;

    entity design_top is
      port (
        term_1_in_p : in  std_logic;
        term_2_in_p : in  std_logic;
        sum_out_p   : out std_logic;
        carry_out_p : out std_logic
      );
    end entity design_top;

While one could easily make a testbench that tests all of the functions of this half-adder, let’s pretend that this is a much more complicated module and we want to test these functions one at a time as we implement them. The half-adder can be broken up into two functions: summing and carry-over.

First, let’s design the summing functionality:

    architecture rtl of design_top is
    begin
      sum_out_p <= term_1_in_p xor term_2_in_p;
    end architecture rtl;

Using VUnit we can now add a testcase to our test suite that targets the sum function of our half-adder, like so:

    test_runner_setup(runner, runner_cfg); -- simulation starts here
    if run("sum test, no carry") then
      write_terms(bfm_control_s, '0', '0');
      wait for 10 ns;
      write_terms(bfm_control_s, '1', '0');
      wait for 10 ns;
      write_terms(bfm_control_s, '0', '1');
      wait for 10 ns;
    end if;
    test_runner_cleanup(runner); -- simulation ends here

In our testcase “sum test, no carry” we command a self-checking Bus Functional Model (BFM) using the procedure “write_terms(bfm_control_s, [term 1], [term 2])” to send stimulus term inputs that target the summing part of our module but not the carry-over part, since we have not yet implemented that part of the design. For that reason the testcase never sets more than one term bit at a time. The BFM in this case monitors the outputs and verifies them, either with plain assertions or with VUnit’s built-in Check Library.
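The values a self-checking BFM would expect for this stimulus can be tabulated with a small reference model. The sketch below is written in Python purely for illustration and is not part of the VUnit testbench; the names `half_adder`, `SUM_TEST_STIMULUS`, and `expected_outputs` are hypothetical. The carry column is included for completeness, although the text does not say whether the BFM checks it at this stage.

```python
def half_adder(term_1, term_2):
    """Return (sum, carry) for two single-bit inputs (0 or 1)."""
    return term_1 ^ term_2, term_1 & term_2


# Stimulus sent by the "sum test, no carry" testcase: at most one bit set.
SUM_TEST_STIMULUS = [(0, 0), (1, 0), (0, 1)]


def expected_outputs(stimulus):
    """Map each (term_1, term_2) pair to its expected (sum, carry) pair."""
    return [half_adder(term_1, term_2) for term_1, term_2 in stimulus]


for (term_1, term_2), (total, carry) in zip(
    SUM_TEST_STIMULUS, expected_outputs(SUM_TEST_STIMULUS)
):
    print(f"term_1={term_1} term_2={term_2} -> sum={total} carry={carry}")
```

None of these three input pairs produces a carry, which is why the testcase can pass before the carry-over logic exists.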

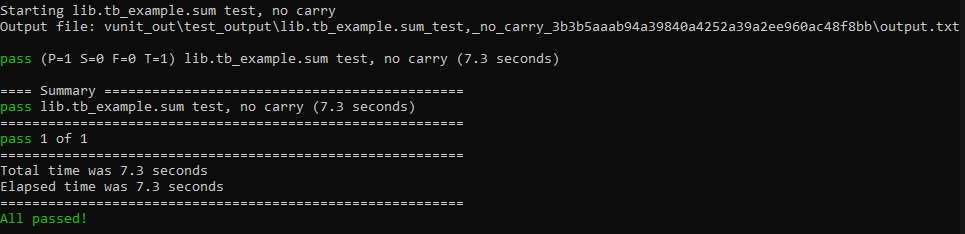

We run our testcases from the command line by executing VUnit’s run.py. This is the central script for a VUnit testbench. It specifies the paths to the testbench and design source code, and it supports several convenient flags and arguments that control various aspects of the test, such as which testcases are executed, whether they are launched in the GUI mode of the selected simulator, and whether the run should stop when a failure is detected. A full list of these arguments can be found in the VUnit documentation. By default, run.py launches all of the detected testcases in the test suite and reports whether each one passed or failed. The result in our case looks like this:

We see that VUnit runs our testcase “sum test, no carry” and our self-checking BFM did not fail any assertions, so our test has passed. Now we can provide a status update and say that our design supports the summing part of our half-adder. Next, let’s finish our design by implementing the carry-over function of the module:

    architecture rtl of design_top is
    begin
      sum_out_p   <= term_1_in_p xor term_2_in_p;
      carry_out_p <= term_1_in_p and term_2_in_p;
    end architecture rtl;

And then we can add an additional testcase to our VUnit test “suite,” like so:

    main : process is
    begin
      test_runner_setup(runner, runner_cfg); -- simulation starts here
      if run("sum test, no carry") then
        write_terms(bfm_control_s, '0', '0');
        wait for 10 ns;
        write_terms(bfm_control_s, '1', '0');
        wait for 10 ns;
        write_terms(bfm_control_s, '0', '1');
        wait for 10 ns;
      elsif run("carry test") then
        write_terms(bfm_control_s, '1', '1');
        wait for 10 ns;
      end if;
      test_runner_cleanup(runner); -- simulation ends here
      wait;
    end process;

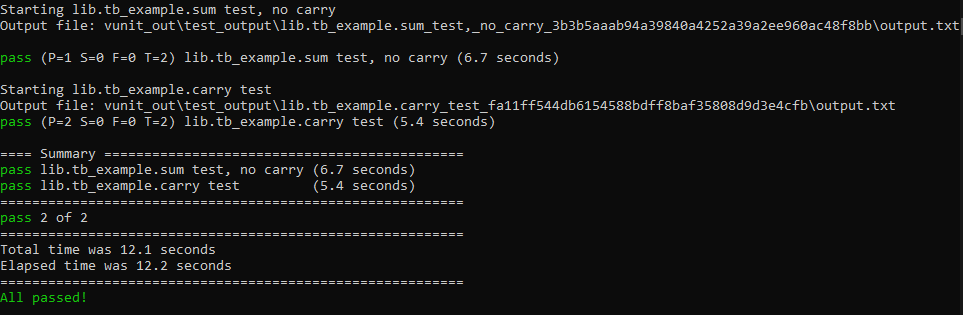

Now we have a testcase “carry test” that will set both term inputs to ‘1’ and verify that the carry-over output from the module goes high. Again, we run our VUnit test suite by executing run.py:

Now we can see that VUnit runs both testcases: “sum test, no carry” and “carry test.” Both testcases pass through our self-checking BFM’s assertions without failures. We can now report that our design supports all required functions.

Suppose that we make changes to the design in the future and we want to verify that a relevant testcase still passes. Perhaps we have started to “upgrade” our design from a half-adder to a full-adder and along the way we would like to verify that our initial carry test still works.
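A small reference model suggests why the existing testcases stay meaningful during such an upgrade: with the carry-in held at 0, a full-adder behaves exactly like the half-adder. The Python sketch below is an illustration only, separate from the VHDL design, and the function names are hypothetical.

```python
def half_adder(a, b):
    """Return (sum, carry) for two single-bit inputs."""
    return a ^ b, a & b


def full_adder(a, b, carry_in):
    """Full-adder built from two half-adders and an OR of their carries."""
    partial_sum, carry_1 = half_adder(a, b)
    total, carry_2 = half_adder(partial_sum, carry_in)
    return total, carry_1 | carry_2


# With carry_in = 0 the full-adder reproduces the half-adder truth table,
# so the original sum and carry expectations still apply.
for a in (0, 1):
    for b in (0, 1):
        assert full_adder(a, b, 0) == half_adder(a, b)
```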

VUnit also allows us to execute specific testcases from our test suite like so:

python run.py "lib.tb_example.carry test"

This way we can verify that something still works without necessarily having to wait for all of the testcases to execute or modify the testbench code itself. VUnit provides this and many other conveniences for controlling the test all from the command line.
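For reference, the run.py behind these commands is usually only a few lines long. The following is a minimal sketch, not the script used for this article: the `src` and `tb` directory layout is an assumption, and the library name `lib` and testbench name `tb_example` are taken from the command shown earlier. Recent VUnit releases expect an explicit `add_vhdl_builtins()` call, while older releases add the builtins automatically and may not provide that method, so treat that line as version-dependent.

```python
from pathlib import Path

from vunit import VUnit

# Directory containing this script; the src/ and tb/ layout is hypothetical.
ROOT = Path(__file__).parent

# Build the project from command-line arguments (test patterns, flags, ...).
vu = VUnit.from_argv()

# Assumption: needed on recent VUnit versions, remove on older ones.
vu.add_vhdl_builtins()

# Compile the design and testbench sources into one library named "lib".
lib = vu.add_library("lib")
lib.add_source_files(ROOT / "src" / "*.vhd")
lib.add_source_files(ROOT / "tb" / "*.vhd")  # e.g. tb/tb_example.vhd

# Run the selected testcases and report pass/fail.
vu.main()

# Example invocations:
#   python run.py                               run every detected testcase
#   python run.py --list                        list testcase names only
#   python run.py "lib.tb_example.carry test"   run a single testcase
#   python run.py "lib.tb_example.*"            select testcases by wildcard
```

Running this requires VUnit and a supported VHDL simulator to be installed.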

Although this is a very simple example, it demonstrates the concept of modular testing and how VUnit can be a powerful tool in the process of bringing up and verifying a large design incrementally by providing more frequent feedback to both the designer and project management.